Norma: Value-Checking for Political Speech

With Jesse, I received some funding from Cosmos Institute and FIRE to work on a project called Norma: Value-Checking for Political Speech.

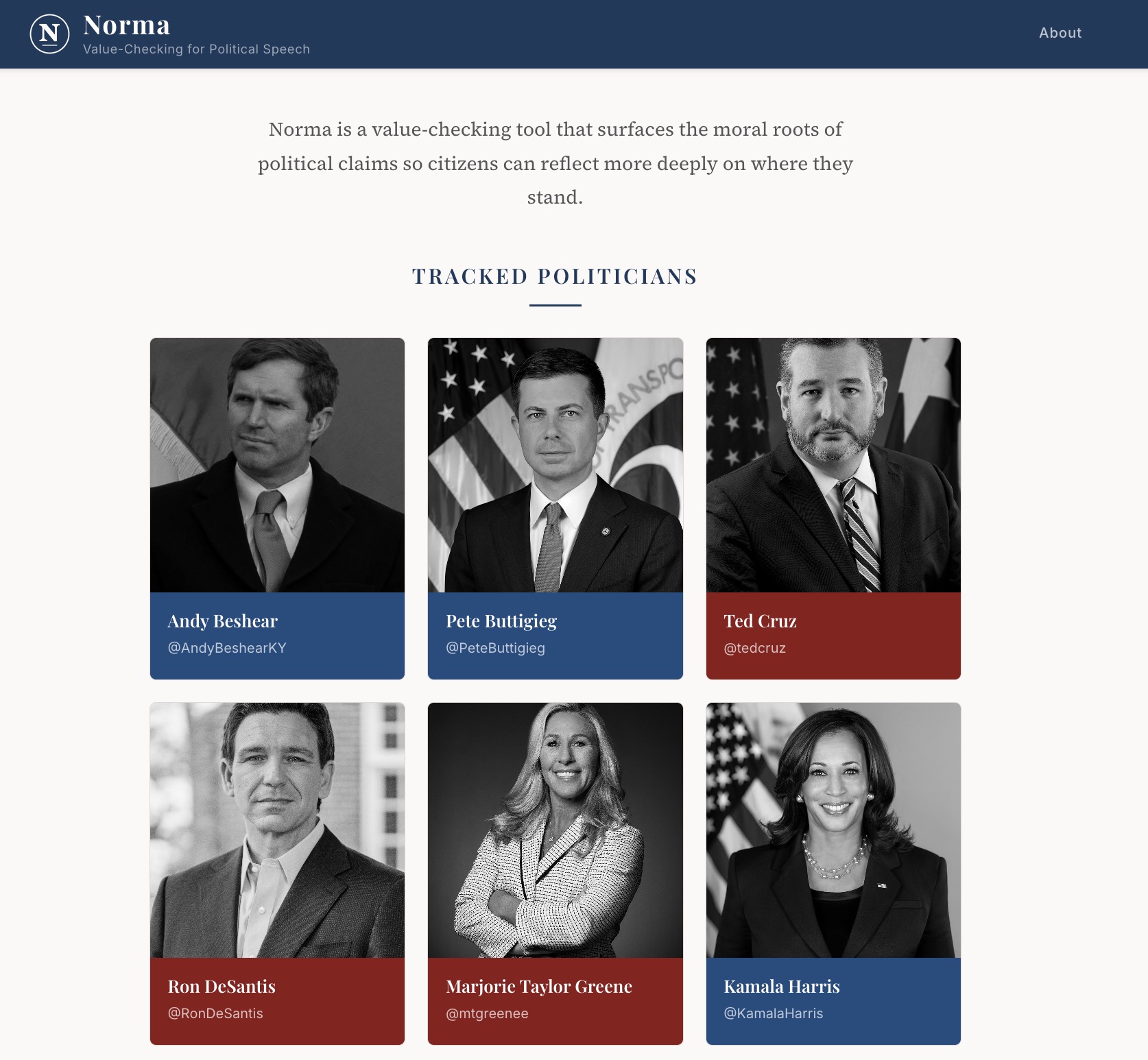

A version of the project is live here. Here is a screenshot of the interface:

We wrote about the project concept here. I think the general direction of the project is more interesting than the implementation itself. Even subtracting out the “AI” part of the project, I think it’s interesting to consider whether/how it might be useful to provide more support for citizens in reasoning about non-fact-based claims.

Below I will re-post the remainder of the project write-up; the write-up was written in somewhat promotional terms for fundraising purposes, but still gets at the main ideas.

About Norma

Norma surfaces the hidden moral and philosophical commitments in political speech. Rather than judging whether a statement is true or false, Norma clarifies the values underlying a claim and connects them to traditions of philosophical debate, helping readers reflect more deeply on where they stand.

The Problem

Today’s tools for governing online political discourse—such as community notes, fact-checking tools, and dis/misinformation moderation—focus heavily on ensuring the factual accuracy of public claims. But facts alone do not settle questions of value.

The normative decision of what we ought to do on the basis of shared facts—what policies to enact, which candidates to support, what principles to uphold—is treated as the domain of individual preference and persuasion. Citizens often find themselves reacting along partisan lines without the resources to articulate why they agree or disagree at the level of moral reasoning. This contributes to polarization and shallow discourse.

Philosophers have long developed methods for clarifying and reasoning about normative commitments; however, these resources remain largely locked in academic references, inaccessible in the fast-moving context of contemporary public debate.

How Norma Works

Norma is an LLM-powered value-checking tool. It ingests normative political statements—tweets, op-eds, speeches—and produces short annotations that:

- Identify the underlying moral values at stake (e.g. appeals to fairness, liberty, patriotism).

- Connect those assumptions to relevant philosophical frameworks and traditions of debate.

- Expose tensions or counter-arguments to encourage reflection rather than partisanship.
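
To make the three annotation steps above a bit more concrete, here is a minimal sketch of how such an annotation could be represented. This is not Norma's actual implementation: the `ValueAnnotation` class, its field names, the prompt wording, and the JSON shape are all assumptions made for illustration, and the call to a language model is deliberately left out.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ValueAnnotation:
    """Hypothetical container for one value-check annotation."""
    values: list[str] = field(default_factory=list)       # e.g. fairness, liberty
    frameworks: list[str] = field(default_factory=list)   # related philosophical traditions
    tensions: list[str] = field(default_factory=list)     # counter-arguments or trade-offs


def build_prompt(statement: str) -> str:
    """Build an illustrative prompt asking a model for the three annotation parts."""
    return (
        "Do not judge whether the statement is true or false.\n"
        "Return JSON with three lists of strings:\n"
        '  "values": the moral values the statement appeals to\n'
        '  "frameworks": philosophical frameworks relevant to those values\n'
        '  "tensions": tensions or counter-arguments worth reflecting on\n\n'
        f"Statement: {statement}"
    )


def parse_annotation(raw_json: str) -> ValueAnnotation:
    """Parse a model's JSON reply (assumed shape) into a ValueAnnotation."""
    data = json.loads(raw_json)
    return ValueAnnotation(
        values=list(data.get("values", [])),
        frameworks=list(data.get("frameworks", [])),
        tensions=list(data.get("tensions", [])),
    )
```

In this sketch, the prompt would be sent to whichever model is in use, and the reply passed to `parse_annotation`. Keeping the three parts as separate fields means an interface could display them independently, for instance showing the values first and the tensions only on request.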

Our Vision

Norma does not aim to persuade users of a particular position; instead, it aims to expand the space for inquiry, equipping citizens with intellectual tools to better understand where they stand and why.

By making visible the underlying value structures across different debates, Norma may surface unexpected overlaps or coalitions. Actors who appear opposed on the surface may in fact share normative commitments, meaning that unlikely alliances could form around principles that contemporary political identities obscure. In this way, Norma may help illuminate new possibilities for political cooperation, or at least highlight the complexity of existing divides.

The above framing is simplistic in a number of ways, including in its naive division of “facts” and “values”. When we presented this project at Cosmos demo day, there were some questions about this aspect of the framing.

In general, I am intellectually sympathetic to complicating these kinds of divisions.

However, for the purposes of Norma, I think there’s also an argument that we can sidestep some of these foundational conceptual questions. Taking a constructivist approach, the line around what counts as a “fact” that can consequently be “fact-checked” is one that already exists in the world, and is deeply naturalized / taken for granted by digital platforms and other institutions like, say, Snopes or Politifact (among many others). There are certain parts of, say, a Bernie tweet that an institution like Snopes thinks can be “fact-checked” and other parts that it thinks cannot be.

So one way to think about what we are up to with Norma and this “value-checking” idea is to suggest that these sorts of institutions (or other builders, platforms etc.) might consider how they can support citizens in reasoning about whatever they imagine is on the other side of that existing boundary of “fact” (regardless of whether that existing line is one that is philosophically defensible).

This doesn’t and shouldn’t preclude engaging with the normativity that’s inherent in the “facts” themselves. Instead, it raises a separate question about where and when we think it is useful to provide reasoning support to citizens in public contexts.

A final note is that this “value-checking” direction does not have much to do with AI or LLMs per se, even though our implementation uses this approach. It’s an interesting question what non-AI versions of this type of direction might look like – e.g. something like “Community Notes” that’s less oriented around fact-making.